In 2026, the landscape of software development has shifted from “manual writing” to “AI orchestration.” With the release of Google’s Gemini 3 Flash and OpenAI’s GPT-5.4, developers are no longer asking whether AI can code; they are asking which model can manage an entire production codebase without breaking a sweat.

While GPT-5.4 is marketed as the pinnacle of “Deep Reasoning,” Gemini 3 Flash has emerged as the “Efficiency King.” This guide breaks down the benchmarks, context windows, and real-world performance to help you decide which model deserves a place in your IDE.

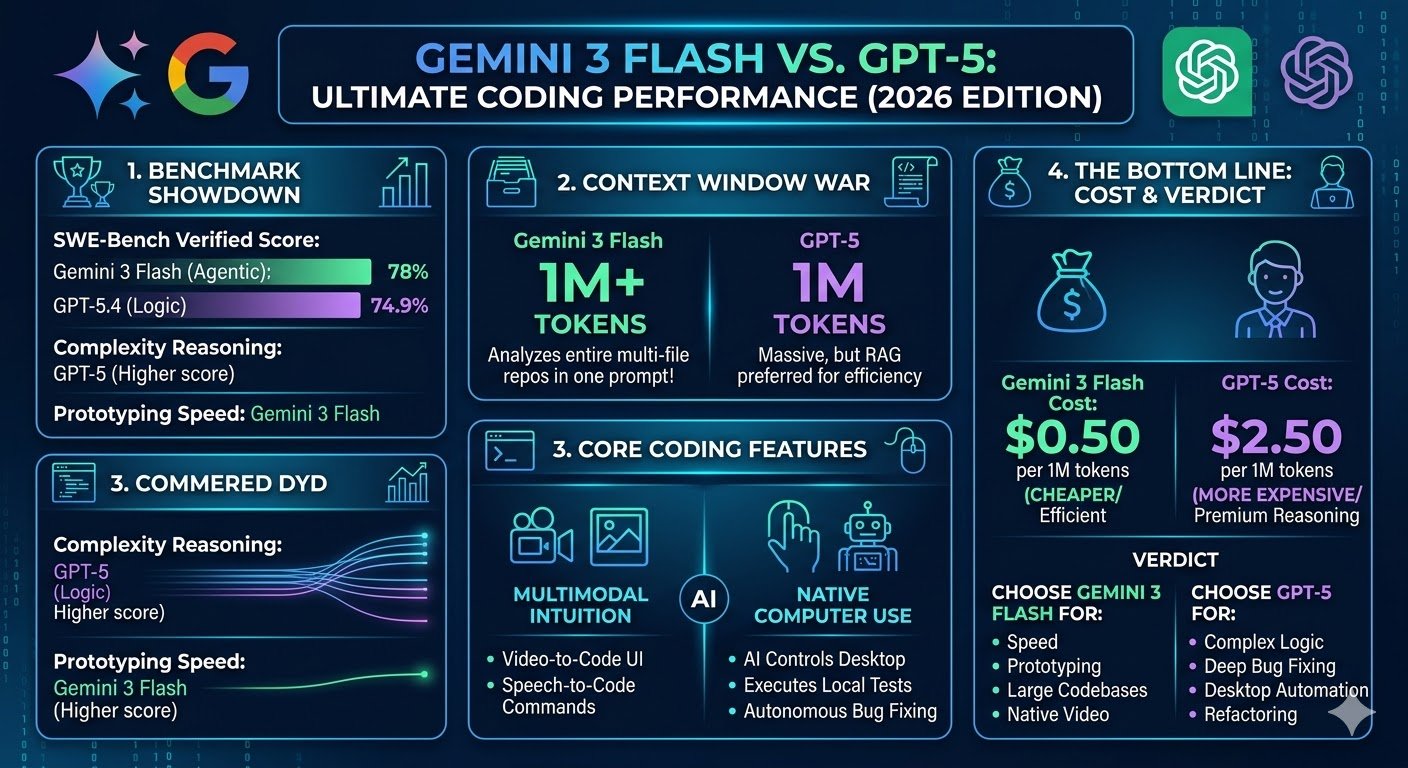

1. The Benchmark Showdown: Agentic Success Rates

In 2026, the industry standard for measuring coding AI is SWE-bench Verified. This benchmark tests an AI’s ability to resolve real-world GitHub issues autonomously.

SWE-bench Performance (Verified 2026)

- Gemini 3 Flash: 78.0%
- GPT-5.4 (High Reasoning): 74.9% – 80.0%
- Claude 4.5 Opus: 80.9%

The Analysis: Surprisingly, Gemini 3 Flash’s 78.0% falls inside GPT-5.4’s reported 74.9% to 80.0% range, so it matches (and sometimes exceeds) the flagship on verified bug-fixing tasks. GPT-5.4 is superior on “SWE-bench Pro,” which involves complex, multi-file architectural changes, while Gemini 3 Flash is significantly more reliable for standard feature implementation and debugging.

Verdict: If you need deep architectural “Thinking,” GPT-5.4 wins. For “Doing” and shipping features fast, Gemini 3 Flash is the smarter agent.

2. Context Window War: 1M vs. 2M Tokens

The biggest bottleneck for developers has always been context. How much of your project can the AI “see” at once?

The Comparison Table

| Feature | Gemini 3 Flash | GPT-5.4 |
| --- | --- | --- |
| Input Context Window | 1,000,000 Tokens | 400,000 – 1,000,000 Tokens |
| Accuracy at Limit | 99.8% (Needle-in-Haystack) | 90% (Degrades after 256K) |
| Long-Context Price | Stable | Doubles after 272K Tokens |
| Best For | Massive Repositories | Snippets & Small Modules |

Why it matters: Gemini 3 Flash allows you to upload an entire medium-sized codebase (~50,000 lines) in a single prompt. GPT-5.4, while powerful, often requires “Prompt Caching” or RAG to maintain accuracy over long sessions, and its “Long-Context Surcharge” makes it much more expensive for large-scale refactoring.
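To make the “can it see my whole project?” question concrete, here is a minimal sketch that estimates a prompt’s token count and checks it against the window sizes from the table. The 4-characters-per-token ratio, the 40-characters-per-line average, and the 10% headroom are all assumptions for illustration; real tokenizers vary by model and by language, so treat the result as a ballpark.

```python
import math

# Context window sizes (in tokens) as quoted in the comparison table.
CONTEXT_WINDOWS = {
    "Gemini 3 Flash": 1_000_000,
    "GPT-5.4 (lower bound)": 400_000,
    "GPT-5.4 (upper bound)": 1_000_000,
}


def estimate_tokens(num_chars: int, chars_per_token: float = 4.0) -> int:
    """Rough token estimate; 4 chars/token is an assumed heuristic."""
    return math.ceil(num_chars / chars_per_token)


def fits_in_window(prompt_tokens: int, window: int,
                   reserve_fraction: float = 0.1) -> bool:
    """True if the prompt fits while keeping some headroom free.

    The headroom (10% by default) is an assumption that leaves space
    for instructions and follow-up turns.
    """
    return prompt_tokens <= window * (1 - reserve_fraction)


if __name__ == "__main__":
    # Assumed example: 50,000 lines averaging 40 characters per line.
    tokens = estimate_tokens(50_000 * 40)
    for name, window in CONTEXT_WINDOWS.items():
        verdict = fits_in_window(tokens, window)
        print(f"{name}: ~{tokens:,} tokens, fits = {verdict}")
```

Under these assumptions a ~50,000-line codebase lands around half a million tokens, which is why the lower end of GPT-5.4’s quoted range becomes the deciding factor.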

3. Core Coding Features: Computer Use vs. Multimodality

The 2026 AI models are no longer just text predictors; they are multimodal operators.

GPT-5.4: Native Computer Use

OpenAI’s “Computer Use API” allows GPT-5.4 to see your desktop, move the mouse, and type into your terminal. It can run your tests, read the error in the console, and go back to VS Code to fix it. This makes it the ultimate tool for Autonomous QA and CI/CD automation.

Gemini 3 Flash: Multimodal Intuition

Google’s strength is in “Seeing and Hearing.” Gemini 3 Flash can process a 2-minute video of a UI bug or an architecture diagram on a whiteboard and turn it into functional React code instantly. For frontend developers and UI/UX designers, Gemini’s ability to understand visual context is unmatched.

4. API Economics: The 5x Pricing Gap

For professional developers and SaaS founders, intelligence must be affordable.

- Gemini 3 Flash: ~$0.50 per 1M input tokens.
- GPT-5.4: ~$2.50 per 1M input tokens.

Gemini 3 Flash is roughly 5x cheaper than GPT-5.4 on input tokens (~$0.50 vs. ~$2.50 per 1M). In a production environment where you run hundreds of “Code Reviews” or “Unit Test Generations” per day, that gap compounds quickly.
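The arithmetic behind that gap can be sketched in a few lines. The prices and the 272K surcharge threshold come from this guide; the workload (300 reviews a day at 20,000 input tokens each) is a made-up example, and billing the whole prompt at double rate once it crosses the threshold is an assumption about how the surcharge applies. Output-token costs are not covered here.

```python
from typing import Optional

# Input prices in USD per 1M tokens, as quoted in this guide.
GEMINI_3_FLASH_PRICE = 0.50
GPT_5_4_PRICE = 2.50
# Threshold after which the guide says GPT-5.4's long-context price doubles.
GPT_5_4_SURCHARGE_AFTER = 272_000


def request_input_cost(tokens: int, price_per_million: float,
                       surcharge_after: Optional[int] = None) -> float:
    """Input cost in USD for a single request."""
    rate = price_per_million
    # Assumption: once the prompt exceeds the threshold, the whole
    # prompt is billed at double the normal input rate.
    if surcharge_after is not None and tokens > surcharge_after:
        rate *= 2
    return tokens / 1_000_000 * rate


def daily_input_cost(requests_per_day: int, tokens_per_request: int,
                     price_per_million: float,
                     surcharge_after: Optional[int] = None) -> float:
    """Input cost in USD for a day of identical requests."""
    per_request = request_input_cost(
        tokens_per_request, price_per_million, surcharge_after
    )
    return requests_per_day * per_request


if __name__ == "__main__":
    # Hypothetical workload: 300 code reviews/day, 20,000 input tokens each.
    gemini = daily_input_cost(300, 20_000, GEMINI_3_FLASH_PRICE)
    gpt = daily_input_cost(300, 20_000, GPT_5_4_PRICE, GPT_5_4_SURCHARGE_AFTER)
    print(f"Gemini 3 Flash: ${gemini:.2f}/day")
    print(f"GPT-5.4:        ${gpt:.2f}/day")
```

For prompts under the threshold the ratio stays at 5x; for a single 500,000-token prompt, the assumed surcharge pushes it to 10x.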

5. Visualizing the Trade-off

To help you choose, look at this performance-to-speed ratio:

[Chart: Performance Density (Intelligence / Time) plotted against Latency (Wait Time). Gemini 3 Flash: instant, high efficiency. GPT-5.4: slower, extreme reasoning.]

6. Frequently Asked Questions (FAQ)

1. Is Gemini 3 Flash really smarter for coding?

It depends on the task. For speed, context handling, and cost, Gemini 3 Flash is smarter. For complex, multi-layered logical puzzles, GPT-5.4 remains the leader.

2. Can I use Gemini 3 Flash for free?

Yes, Google AI Studio offers a generous free tier for Gemini 3 Flash, which is ideal for individual developers and prototyping.

3. Which model is better for Python and AI development?

GPT-5.4 has a slight edge in mathematical reasoning and data science logic, whereas Gemini 3 Flash is better for web frameworks and API integrations.

4. How does GPT-5.4’s “Thinking” mode affect coding?

GPT-5.4 allows you to toggle “Thinking Levels.” Setting it to “High” or “Extreme” ensures that the AI double-checks its logic before outputting code, reducing hallucinations by nearly 30% compared to GPT-4.

5. What is “Vibe Coding”?

Vibe Coding is a 2026 term for natural language programming. Because of its low latency and high context, Gemini 3 Flash is currently the favorite model for “Vibe Coders” using tools like Cursor or Windsurf.

Final Verdict: The 2026 Decision Matrix

- Choose Gemini 3 Flash if: You work with huge codebases, need fast responses, are on a budget, or need to turn visual diagrams into code.

- Choose GPT-5.4 if: You need an AI agent to control your desktop, require the absolute highest reasoning for backend security, or need native DALL-E image generation for your assets.

- The Power Move: Most top-tier developers in 2026 use a Hybrid Workflow. They use Gemini 3 Flash for initial code generation and codebase analysis, then use GPT-5.4 for a final “Deep Reasoning” review to catch edge cases.
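The decision matrix and the Hybrid Workflow can be written down as a tiny routing rule. This is only a sketch of the logic in the list above: the `Task` fields, the function names, and the model labels are hypothetical and are not confirmed API model IDs.

```python
from dataclasses import dataclass

# Illustrative labels only; not confirmed API model identifiers.
GEMINI = "gemini-3-flash"
GPT = "gpt-5.4"


@dataclass
class Task:
    """Hypothetical description of a coding task."""
    needs_desktop_control: bool = False
    needs_deep_reasoning: bool = False


def pick_model(task: Task) -> str:
    """Route a task following the decision matrix above."""
    # GPT-5.4 for desktop control or the highest reasoning demands.
    if task.needs_desktop_control or task.needs_deep_reasoning:
        return GPT
    # Everything else (large codebases, speed, budget, visual input)
    # goes to Gemini 3 Flash.
    return GEMINI


def hybrid_plan() -> list[tuple[str, str]]:
    """Stages of the Hybrid Workflow paired with the model for each."""
    return [
        ("initial code generation", GEMINI),
        ("codebase analysis", GEMINI),
        ("final deep-reasoning review", GPT),
    ]


if __name__ == "__main__":
    print(pick_model(Task(needs_deep_reasoning=True)))
    for stage, model in hybrid_plan():
        print(f"{stage}: {model}")
```

Keeping the rule in one small function makes it easy to adjust as pricing or benchmark results change.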